Projects

My projects and research.

2026

MechaOctopus - Humanoid Robot Tutorials

Hands‑on Isaac Lab tutorials for humanoid robots, intended as one of the first practical, end‑to‑end guides. The first tutorial walks through creating and training a standing task for the G1 humanoid on flat terrain using the g1_stand extension, without modifying Isaac Lab core.

ForteGrip: A Compact, High-Force, Backdrivable Parallel Gripper

Overview

Parallel grippers typically trade off compactness, stroke, force, and backdrivability. ForteGrip uses a collapsible rail architecture with load‑sharing crank arms, so the stroke can exceed the folded width while the gripper sustains high forces. This suits tight spaces and safe, compliant interaction. An inverted guide-rail layout folds into the body, and a crank-slider linkage with roller bearings takes load off the linear guide.

- Strong grasping and continuous‑force capability, with high backdrivability, compared with compact commercial grippers.

- FEA‑backed structure: safe stresses and small deflections under worst‑case loading.

- Bench validation for backdrivability and grasp force; qualitative demos (delicate chips, deformable cup, heavy book).

Performance & demos

The scatter plot situates ForteGrip alongside representative commercial parallel grippers on folded geometry and force density; the qualitative strip shows everyday manipulation that stresses delicacy, compliance, and payload in one place.

Teensy 4.1 Ethernet-UDP to CAN bridge for Robstride O2

Teaser

Three Robstride O2 motors on Can0 with a Teensy 4.1 spine board, controlled from a PC over Ethernet-UDP.

Overview

Robstride O2 actuators use a vendor‑specific CAN protocol with 29‑bit (extended) identifiers; this project gives a PC a simple, predictable path to that bus. A Teensy 4.1 receives control batches over UDP, drives Can0, and accepts the next batch only after Type 2 feedback has arrived from every configured motor (one to three in a daisy chain). This round-based discipline keeps loop timing steady for logging, teleop, or downstream controllers.
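The round-based gating can be illustrated with a small sketch. This is a Python illustration of the idea only, not the repository's Teensy firmware; the class name `RoundGate`, its method names, and the example motor IDs are hypothetical.

```python
class RoundGate:
    """Tracks which configured motors still owe feedback in the current round."""

    def __init__(self, motor_ids):
        # Assumption: one to three motors, matching the daisy chain described above.
        if not 1 <= len(motor_ids) <= 3:
            raise ValueError("expected between 1 and 3 motor IDs")
        if len(set(motor_ids)) != len(motor_ids):
            raise ValueError("motor IDs must be unique")
        self.motor_ids = frozenset(motor_ids)
        self.pending = set()  # empty set means no round is in flight

    def start_round(self):
        """Call after a control batch has been sent onto the bus."""
        self.pending = set(self.motor_ids)

    def on_feedback(self, motor_id):
        """Call for each feedback frame; IDs that are not pending are ignored."""
        self.pending.discard(motor_id)

    def ready_for_next_batch(self):
        """True once every configured motor has reported in this round."""
        return not self.pending
```

In use, the bridge would call `start_round()` after forwarding a batch, `on_feedback()` for each incoming feedback frame, and would accept a new UDP batch only when `ready_for_next_batch()` returns true. A real implementation would likely also need a timeout so that one silent motor cannot stall the loop; that is left out here.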

The repository includes Teensy firmware, a C++ helper layer for O2 frames, a threaded host UDP client, CMake‑built PC demos (comm test, multi‑mode control including MIT payloads, multi‑motor runs), a set of smaller Teensy sketches for bring‑up and timing experiments, and a Python script to plot optional timing logs.

Methodology

By default, the host sends UDP to the Teensy on port 8003, with static IPs on a small LAN (documented in the repo). Firmware parameters must match your motor IDs and the number of nodes on the chain. The main PC demo exposes several control modes; optional streaming to a second UDP port records per‑motor timing for offline frequency and latency checks.

Highlights

- Ethernet-UDP ↔ CAN bridge on Teensy 4.1 with synchronized Type 2 feedback across up to three O2 motors.

- Host stack: CMake, C++14, Asio for UDP; shared client and protocol code reused across demos.

- Optional timing export plus Python plotting for validating control rate and latency.
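As a rough illustration of the kind of check the timing export enables, the sketch below computes a mean loop rate and worst-case period deviation. It assumes the log has already been parsed into a list of per-round timestamps in seconds; the actual log format and the repository's plotting script are not described here, and `loop_stats` is a hypothetical name.

```python
from statistics import mean


def loop_stats(timestamps_s):
    """Return (mean rate in Hz, largest deviation from the mean period in seconds)."""
    if len(timestamps_s) < 2:
        raise ValueError("need at least two timestamps")
    # Period between consecutive rounds.
    periods = [b - a for a, b in zip(timestamps_s, timestamps_s[1:])]
    if any(p <= 0 for p in periods):
        raise ValueError("timestamps must be strictly increasing")
    mean_period = mean(periods)
    # Worst-case jitter: how far any single period strays from the mean.
    jitter = max(abs(p - mean_period) for p in periods)
    return 1.0 / mean_period, jitter
```

For example, `loop_stats([0.0, 0.002, 0.004, 0.006])` describes a loop running at about 500 Hz with negligible jitter.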

2025

Reinforcement Learning for Quadruped Walking

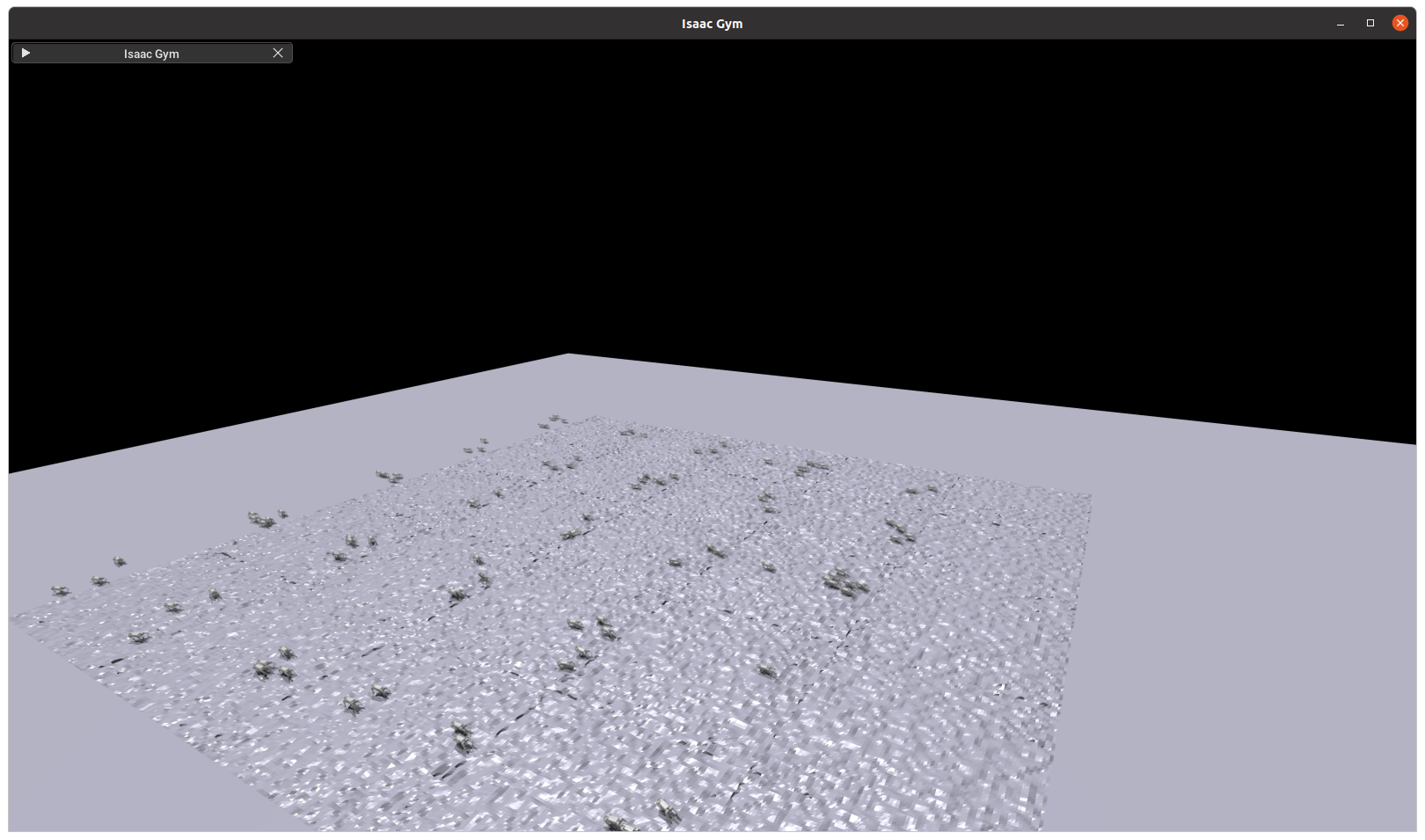

Teaser

Stable, symmetric walking on rough terrain (blind policy).

Overview

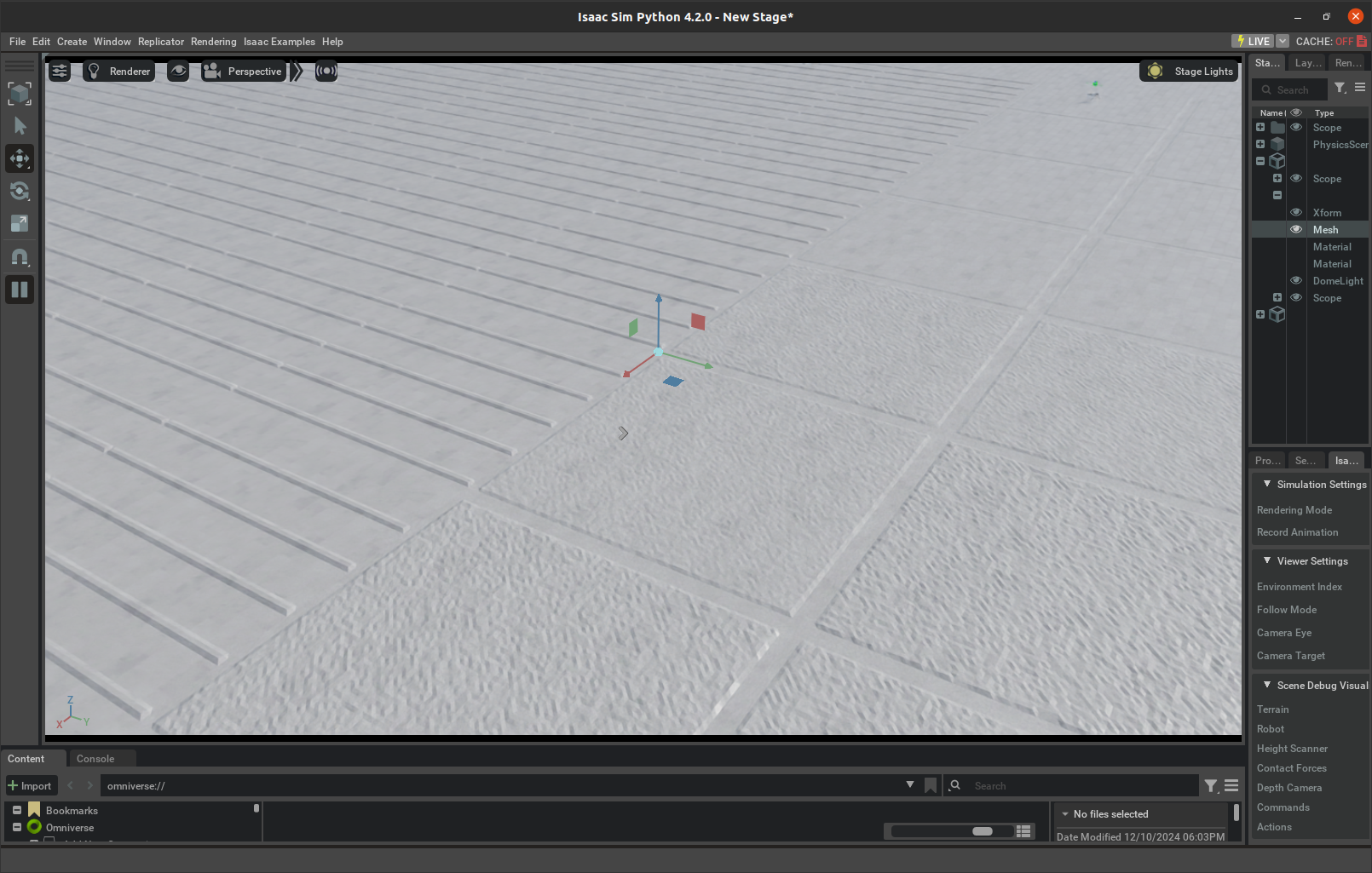

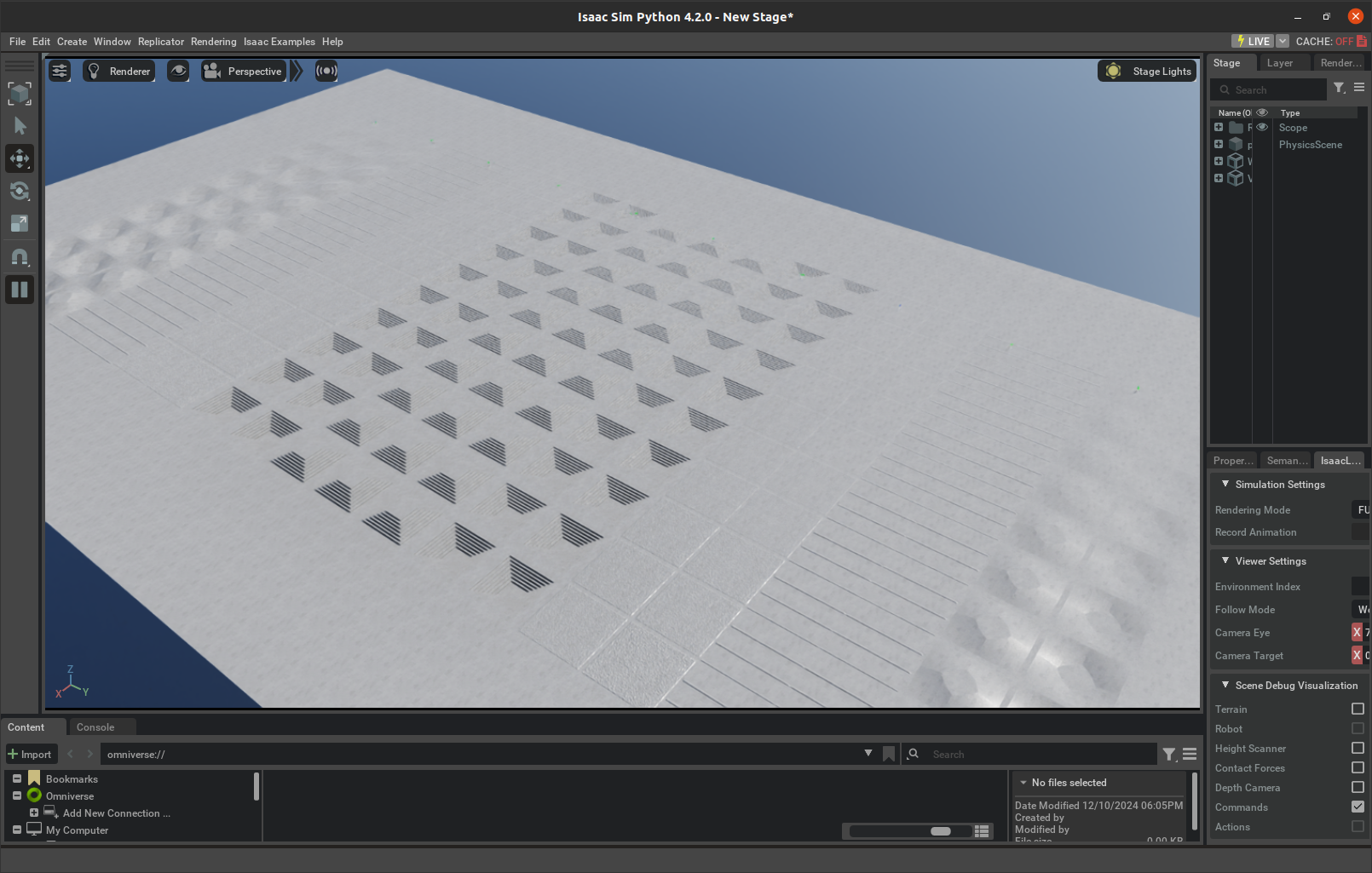

We study RL policies for elegant quadruped locomotion. By shaping training terrains and tuning rewards to favor long, low strides and consistent body height, we obtain more natural, symmetric gaits across flat and rough terrains. We also explore depth-camera perception to climb stairs and navigate obstacles.

Methodology

Simulation in Isaac Lab with diverse terrains (flat, rough, rails, waves). The reward emphasizes velocity tracking, base height, and smooth actuation to discourage pronking. Policies are trained with many parallel environments on GPU.
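To make the three reward ingredients concrete, here is a minimal per-step sketch in plain Python. It is not the reward used in this project: the functional forms, weights, kernel width, and target height are all illustrative assumptions, and `locomotion_reward` is a hypothetical name.

```python
import math


def locomotion_reward(cmd_vel, base_vel, base_height, action, prev_action,
                      target_height=0.30, w_vel=1.0, w_height=0.5, w_smooth=0.01):
    """Scalar reward for one step; every weight and constant here is illustrative."""
    # Velocity tracking: exponential kernel, equal to 1.0 when the command is matched.
    vel_err = sum((c - v) ** 2 for c, v in zip(cmd_vel, base_vel))
    r_vel = math.exp(-vel_err / 0.25)
    # Base height: quadratic penalty around the target; a bouncing body raises it.
    p_height = (base_height - target_height) ** 2
    # Smooth actuation: penalize the change in action between consecutive steps.
    p_smooth = sum((a - b) ** 2 for a, b in zip(action, prev_action))
    return w_vel * r_vel - w_height * p_height - w_smooth * p_smooth
```

With this shape, a policy that tracks the commanded velocity but bounces or jerks its joints scores lower than one that holds height and changes actions gradually, which is the pressure against pronking described above.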

Walking Gaits Without Terrain Shaping

Flat-only training often induces pronking and irregular gaits. Terrain structure encourages more natural symmetry.

Final Results

Deployments on Unitree Go1 Edu

Vision‑Based Human‑Following Robot

Overview

Implemented a vision‑based human‑following capability on the Triton mobile robot. Perception is powered by YOLOv5 running on a Jetson Nano with an Intel RealSense D435 RGB‑D camera, and the system supports both teleoperation and autonomous modes. Gesture‑based activation was integrated using MediaPipe to enable hands‑free control.

Methodology

The architecture comprises four modules: Human Recognition, Human Following, Gesture Recognition, and Obstacle Avoidance. On‑board RGB‑D and LiDAR provide target tracking and collision avoidance. An external webcam supplies gesture inputs that are fused into the control state machine for safe mode switching.
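One way to make gesture-driven mode switching safe is to require a gesture to persist for several frames before acting on it. The sketch below shows that idea only; the gesture labels, the `hold_frames` threshold, and the class names are hypothetical and not taken from the project.

```python
from enum import Enum


class Mode(Enum):
    TELEOP = "teleop"
    FOLLOW = "follow"


class ModeSwitch:
    """Changes mode only after the same gesture is seen on consecutive frames."""

    # Hypothetical gesture labels mapped to the mode they request.
    GESTURE_TO_MODE = {"open_palm": Mode.FOLLOW, "fist": Mode.TELEOP}

    def __init__(self, hold_frames=5):
        self.mode = Mode.TELEOP  # start in the manually controlled mode
        self.hold_frames = hold_frames
        self._last = None
        self._count = 0

    def update(self, gesture):
        """Feed one per-frame gesture label (or None); returns the current mode."""
        if gesture == self._last:
            self._count += 1
        else:
            # A different label (or a missed detection) restarts the count.
            self._last, self._count = gesture, 1
        requested = self.GESTURE_TO_MODE.get(gesture)
        if requested is not None and self._count >= self.hold_frames:
            self.mode = requested
        return self.mode
```

Debouncing this way trades a little latency for robustness against single-frame misclassifications.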

Results

- Human recognition: ≥ 85% accuracy under varied lighting and occlusion conditions.

- Human following: up to 1 m/s with ≤ 0.3 m mean distance error.

- Gesture recognition: ≥ 80% classification accuracy with ≤ 1.5 s end‑to‑end latency.

- Obstacle avoidance: ≥ 95% collision‑free trials in store‑like layouts.

Contributions

- Designed and implemented obstacle‑avoidance algorithms with LiDAR fusion and safety checks.

- Built the laptop‑to‑Triton communication bridge for remote control, telemetry, and logging.

- Implemented hand‑gesture recognition and integrated it with the control pipeline for mode selection.

Videos

ROS laptop‑robot connection test.

Hand‑gesture recognition and motion tests.

2023

Plastic Bottle Sorting with 6DOF Arm

End‑to‑end vision‑guided sorting of plastic bottles using a 6DOF arm. Real‑time perception, conveyor tracking, and a custom gripper enable high‑accuracy, high‑throughput pick‑and‑place on a moving line.

Results & Impact

- ≈98% detection and sorting accuracy on a moving conveyor.

- Reduced cycle time via optimized gripper geometry and grasp sequencing.

- Robust to lighting and pose variation with real-time instance segmentation.

- Higher throughput from stabilized pick-and-place and fewer false rejects.

System Overview

Components: real‑time Mask R‑CNN for instance segmentation; belt/encoder synchronization and object tracking; motion planning with grasp sequencing; and a CAD‑designed end‑effector to improve grasp stability and tolerance to bottle variance.
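Belt/encoder synchronization usually comes down to dead-reckoning an object's position from encoder counts between detection and pick. A minimal sketch follows, assuming a straight belt, a single axis along the direction of travel, and a known millimetres-per-count calibration; the function and parameter names are hypothetical.

```python
def predict_belt_position(x_detect_mm, counts_at_detect, counts_now, mm_per_count):
    """Current position along the belt of an object detected earlier."""
    return x_detect_mm + (counts_now - counts_at_detect) * mm_per_count


def counts_until_pick(x_detect_mm, x_pick_mm, mm_per_count):
    """Encoder counts the belt must advance before the object reaches the pick line."""
    if mm_per_count <= 0:
        raise ValueError("mm_per_count must be positive")
    # Clamp at zero: an object already past the pick line needs no further travel.
    return max(0.0, (x_pick_mm - x_detect_mm) / mm_per_count)
```

Tying the prediction to encoder counts rather than elapsed time keeps it valid when the belt speed varies.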

Videos

Arm operation: calibration and sorting cycle (YouTube).

Robot and camera calibration steps (YouTube).

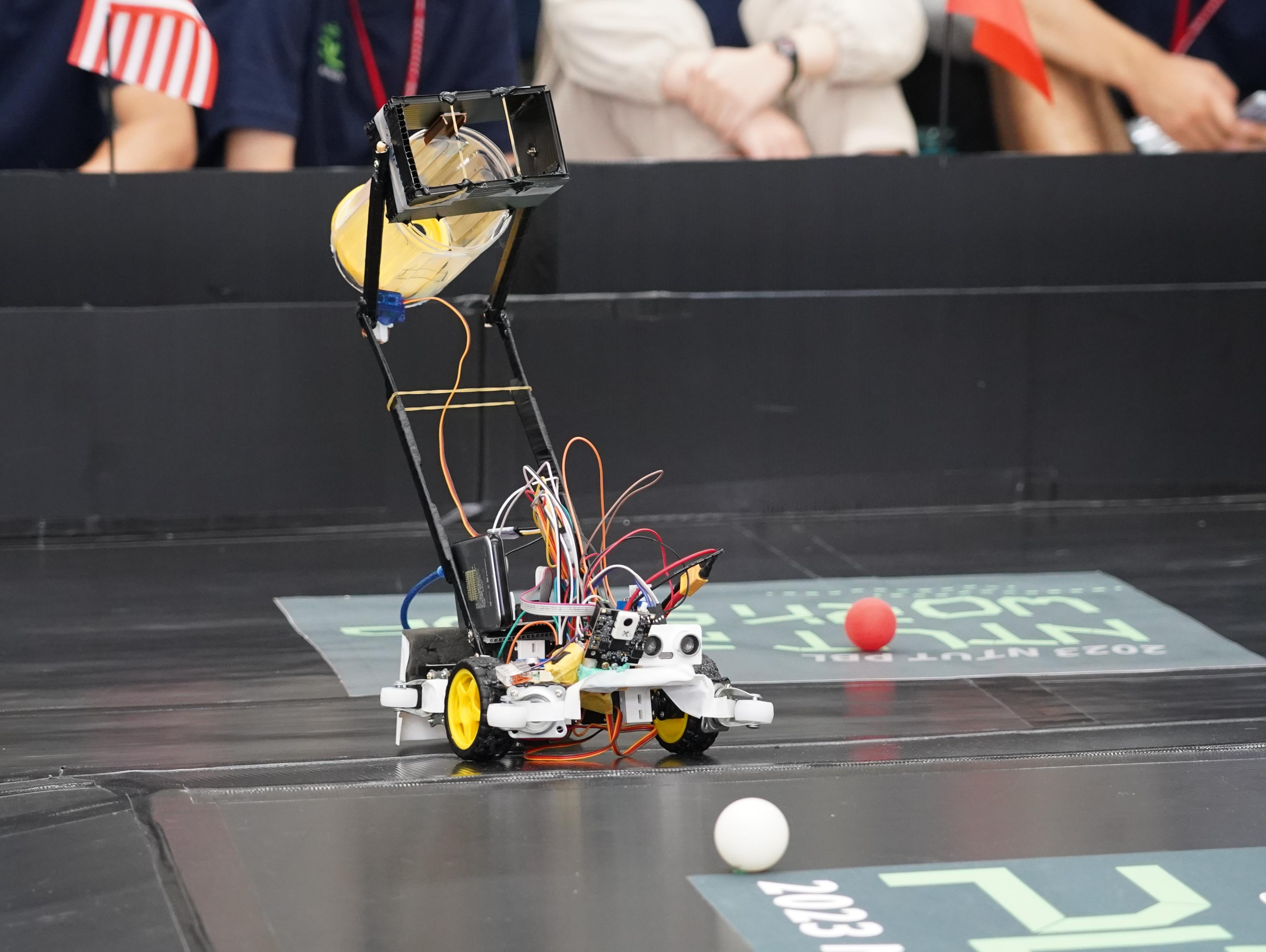

Ball‑Picking Robot (NTUT PBL - Second Prize)

Overview

Represented Ho Chi Minh City University of Technology (HCMUT) at the Project‑Based Learning program hosted by National Taipei University of Technology (NTUT). In a 10‑day sprint, our team built a ball‑picking robot and placed second overall.

Technical Highlights

- Designed an OpenCV vision pipeline to robustly detect red balls and reject distractors, improving selection accuracy by more than 25% compared with early prototypes.

- Implemented an embedded C control stack to recognize targets, compute throw trajectories, and actuate the pick‑and‑throw mechanism autonomously.

- Coordinated integration and on‑site testing across a 10‑student team.
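A common subtlety in red-object detection is that red sits at both ends of the hue circle. The sketch below shows a per-pixel check using only Python's standard `colorsys` module so that it stays dependency-free; the original pipeline used OpenCV, and the thresholds and the name `is_red` are illustrative assumptions rather than the team's values.

```python
import colorsys


def is_red(r, g, b, hue_tol=0.05, min_sat=0.5, min_val=0.3):
    """Classify an 8-bit RGB pixel as red using HSV thresholds (all illustrative)."""
    h, s, v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
    # Hue is in [0, 1) and red wraps around, so accept hues near 0 or near 1.
    near_red = h <= hue_tol or h >= 1.0 - hue_tol
    # Saturation and value floors reject grey and very dark pixels.
    return near_red and s >= min_sat and v >= min_val
```

The saturation and value floors are what help reject distractors such as orange, grey, or dark objects that a hue test alone might let through.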

Media

Sources: YouTube workshop clip and Taipei Tech Post Issue 61.